Self-Hosted Voice Assistant With Home Assistant: The Complete 2026 Guide to Ditching Alexa

Last month, my Alexa interrupted a timer notification to recommend I buy a new air fryer. That was it for me.

I'd been tolerating the slow creep of ads, the "by the way" suggestions, the growing unease of knowing every voice command I'd ever uttered was sitting on Amazon's servers. But when a device I paid for started pitching me products mid-interaction, I decided to build something better. Something that listens only when I want it to, processes everything locally, and doesn't have a business model built on monetizing my voice data.

That something is Home Assistant's Assist. I've been running it for several months across my entire home now, and I'm done hedging: the self-hosted voice assistant is no longer a hobby project. It's a genuine replacement.

Why 2026 Is the Year to Make the Switch

The timing isn't a coincidence. A few things happened at once.

The commercial alternatives are getting worse. Not stagnating. Worse. Amazon's Alexa has been layering in generative AI features that most users didn't ask for, and the subscription model they'd been hinting at for years is now reality. Google Assistant has been quietly deprioritized in favor of Gemini integrations that feel half-baked for smart home control. If you've noticed your Google Home responding slower or less accurately lately, you're not imagining it.

Meanwhile, the open-source tooling has gotten genuinely good. Home Assistant's "Year of the Voice" initiative, led by founder Paulus Schoutsen, set an ambitious goal: let users control their smart homes in their own language, locally and privately. It delivered. The voice pipeline supports 51 languages for voice commands and 22 for wake words. That's not a toy.

And the hardware caught up. You no longer need a beefy server to run speech-to-text locally. A Raspberry Pi 4 can handle basic Whisper inference, and a modest mini PC with a mid-range GPU delivers response times that rival cloud-based assistants. Having spent time working with local LLM setups and the hardware trade-offs involved, I can tell you the barrier to entry has never been lower.

The Architecture: How Home Assistant Voice Actually Works

Before you start buying hardware, understand what you're building. Home Assistant's voice pipeline is modular. That's its greatest strength and the thing that intimidates newcomers.

Three core stages:

- Speech-to-Text (STT): Your voice gets converted to text. The local option is Whisper, OpenAI's open-source speech recognition model. Runs entirely on your hardware.

- Intent Recognition: The text is parsed to figure out what you want. Home Assistant's built-in intent system handles standard commands ("turn off the kitchen lights"), while an optional local LLM can handle more complex, conversational stuff.

- Text-to-Speech (TTS): The response is spoken back to you. Piper is the open-source TTS engine, and it sounds surprisingly natural for something running on a $50 board.

Every component can be swapped independently. Run Whisper locally but use Home Assistant Cloud for TTS if you want. Go fully local for everything. Plug in a local LLM like Llama for the intent layer while keeping the rest simple. Your call.
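To make the "swap any stage" idea concrete, here is a minimal sketch of the three-stage shape in Python. It is not Home Assistant's code: the class names (`VoicePipeline`, `FakeWhisper`, `BuiltInIntents`, `FakePiper`) are hypothetical stand-ins I made up for illustration, and the "audio" is just UTF-8 text so the example runs without a microphone or a model.

```python
from dataclasses import dataclass
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class IntentHandler(Protocol):
    def handle(self, text: str) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


@dataclass
class VoicePipeline:
    """Three independent stages; any one can be replaced without touching the others."""
    stt: SpeechToText
    intent: IntentHandler
    tts: TextToSpeech

    def run(self, audio: bytes) -> bytes:
        text = self.stt.transcribe(audio)   # stage 1: speech-to-text
        reply = self.intent.handle(text)    # stage 2: intent recognition
        return self.tts.synthesize(reply)   # stage 3: text-to-speech


# Hypothetical stand-ins for illustration only. None of these talk to real engines.
class FakeWhisper:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")  # pretend the audio is already text


class BuiltInIntents:
    def handle(self, text: str) -> str:
        if text == "turn off the kitchen lights":
            return "Turned off the kitchen lights"
        return "Sorry, I didn't understand that"


class FakePiper:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")  # pretend the text is already audio


pipeline = VoicePipeline(FakeWhisper(), BuiltInIntents(), FakePiper())
print(pipeline.run(b"turn off the kitchen lights"))
```

Swapping in an LLM later would mean assigning a different object to `pipeline.intent`; the STT and TTS stages never need to know.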

The right architecture isn't the most complex one. It's the one you'll actually maintain six months from now.

For most people, I recommend starting fully local with Whisper + Home Assistant's built-in intent system + Piper. No LLM needed. This covers 90% of smart home voice commands and is dead simple to maintain. Add an LLM layer later if you want it.

Hardware: What You Actually Need (and What You Don't)

This is where most guides lose the plot, recommending $2,000 GPU setups for what is essentially a light switch controller. Let me break this into tiers based on what I've tested and what community members like Nicolas Mowen (crzynik) have documented.

The Starter Setup (~$100-150)

- Server: Raspberry Pi 4 (4GB) or an old laptop collecting dust in your closet

- Satellite: Your phone (Home Assistant Companion app) or a roughly $13 M5Stack ATOM Echo (an ESP32-S3-BOX also works, but costs more)

- What you get: Basic voice commands with 3-5 second response times. Perfectly usable for lights, switches, and simple automations.

Start here. Don't overthink it. Get the pipeline working, live with it for a week, then decide if you want to invest more.
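When you do get to the "should I invest more" question, it helps to know which stage is eating the time. The sketch below is one way to measure that, under the assumption that you can wrap each stage in a plain Python callable. The stage functions here (`fake_stt`, `fake_intent`, `fake_tts`) are made-up placeholders, and the sleep in `fake_stt` only simulates a slow stage; it is not a measurement of Whisper.

```python
import time
from typing import Any, Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[Any], Any]]


def time_stages(stages: List[Stage], payload: Any) -> Tuple[Any, Dict[str, float]]:
    """Run stages in order, feeding each output to the next, and record seconds per stage."""
    timings: Dict[str, float] = {}
    for name, stage in stages:
        start = time.perf_counter()
        payload = stage(payload)
        timings[name] = time.perf_counter() - start
    return payload, timings


# Hypothetical placeholder stages, not real engines.
def fake_stt(audio: bytes) -> str:
    time.sleep(0.02)  # simulated delay so one stage is visibly slower
    return audio.decode("utf-8")


def fake_intent(text: str) -> str:
    return f"OK: {text}"


def fake_tts(reply: str) -> bytes:
    return reply.encode("utf-8")


STAGES: List[Stage] = [("stt", fake_stt), ("intent", fake_intent), ("tts", fake_tts)]

result, timings = time_stages(STAGES, b"turn on the lamp")
slowest = max(timings, key=timings.get)
for name, seconds in timings.items():
    print(f"{name}: {seconds * 1000:.1f} ms")
print("slowest stage:", slowest)
```

If speech-to-text dominates, a faster server is the upgrade that matters. If it doesn't, new hardware probably won't change how the assistant feels.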

The Sweet Spot (~$300-500)

- Server: A used mini PC (Intel N100 or similar) with 16GB RAM

- Satellites: Home Assistant Voice Preview Edition or custom ESP32-based devices in each room

- What you get: 1-3 second response times with the Whisper medium model. Fast enough that you don't feel like you're waiting.

This is what I run. The mini PC handles Whisper and Piper without breaking a sweat, and the ESP32 satellites give me whole-home coverage. The Voice Preview Edition hardware is purpose-built for this, with a solid microphone array and speaker.

The Enthusiast Tier (~$800+)

- Server: Mini PC with eGPU enclosure + dedicated GPU (RTX 3090, RX 7900XTX)

- Satellites: Multiple Voice PE units or custom builds

- LLM: Local model via llama.cpp for conversational AI

Nicolas Mowen's community post documents this tier extensively. He tested everything from an RTX 3050 to a 3090, and the results are impressive. With a 24GB GPU running a 20-30B parameter mixture-of-experts model, you get 1-2 second response times that are genuinely competitive with cloud assistants. If you've been exploring the differences between AMD ROCm and CUDA for local AI workloads, both ecosystems work well here. Mowen confirmed comparable 1-2 second response times on both the RTX 3090 and RX 7900XTX.

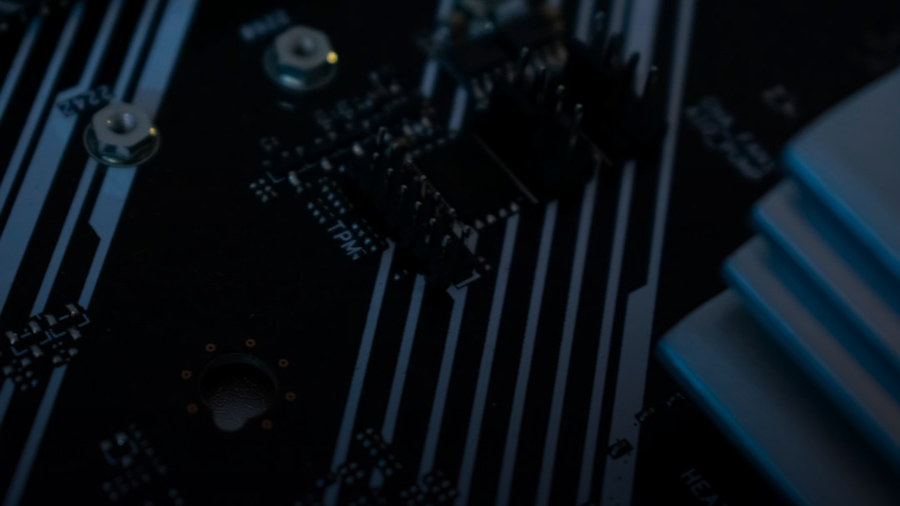

Setting It Up: The Practical Walkthrough

I'm not writing a step-by-step installation guide. Home Assistant's official docs do that well, and they change with every release. What I will do is tell you the things the documentation doesn't emphasize enough.

Start with Home Assistant OS, not Docker. I know. If you're a senior engineer, your instinct is to run everything in containers on your existing infrastructure. I've shipped enough container orchestration to understand that impulse. Resist it here. Home Assistant OS handles add-on management, audio routing, and device discovery in ways that save you hours of debugging. You can always migrate later.

Install three add-ons: Whisper, Piper, and openWakeWord. That's it for a fully local pipeline. Whisper handles speech-to-text, Piper handles text-to-speech, openWakeWord listens for your trigger phrase. All three install with one click from the add-on store.

Configure your voice pipeline. Settings → Voice Assistants → Add Assistant. Select your local Whisper instance for STT, your local Piper instance for TTS, and Home Assistant for conversation (the built-in intent engine). Pick a wake word. "Hey Jarvis" and "Okay Nabu" work out of the box.

Expose your entities. This is the step everyone forgets. Home Assistant won't let Assist control devices unless you explicitly expose them. Go to Settings → Voice Assistants → Expose and toggle on the devices you want voice control over. Name them clearly. "Kitchen overhead light" will be recognized more reliably than "Light 7."

Test with your phone first. Open the Companion app, tap the Assist icon in the top right, try some commands. Get this working before you add satellite hardware. Debugging a voice pipeline is much easier when you can eliminate the microphone as a variable.
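You can take the same idea one step further and remove audio entirely by sending plain text to the conversation engine. The sketch below uses Home Assistant's REST endpoint `/api/conversation/process` with a long-lived access token. The base URL and token are placeholders you'd replace with your own, the helper names (`build_request`, `ask`, `spoken_reply`) are mine, and the response shape I read from is what I'd expect from the REST API docs, so treat it as an assumption and check it against your version.

```python
import json
import urllib.request

# Assumptions: adjust both values for your own install.
HA_URL = "http://homeassistant.local:8123"
TOKEN = "YOUR_LONG_LIVED_ACCESS_TOKEN"  # placeholder, created under your user profile


def build_request(text: str, base_url: str = HA_URL, token: str = TOKEN,
                  language: str = "en") -> urllib.request.Request:
    """Build the POST request that asks the conversation engine to handle `text`."""
    body = json.dumps({"text": text, "language": language}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/conversation/process",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(text: str) -> dict:
    """Send the request and return the decoded JSON reply (needs a reachable instance)."""
    with urllib.request.urlopen(build_request(text), timeout=10) as resp:
        return json.load(resp)


def spoken_reply(result: dict) -> str:
    """Pull the plain-text reply out of the response, or '' if the shape differs."""
    return (
        result.get("response", {})
        .get("speech", {})
        .get("plain", {})
        .get("speech", "")
    )


# Example, uncomment once HA_URL and TOKEN are real:
# print(spoken_reply(ask("turn on the kitchen overhead light")))
```

This only exercises the intent layer and your exposed entities. If a command works here but fails by voice, the problem is in speech-to-text or the microphone, not in your entity names.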

Then add satellites. Once the pipeline works on your phone, adding ESP32-based satellites or a Voice Preview Edition to different rooms is straightforward. Each satellite connects to your Home Assistant instance and uses the same pipeline you already configured.

The Honest Trade-offs

I'm not going to pretend this is a zero-compromise Alexa replacement. It isn't. Here's what you should know going in.

Music and media playback is harder. Alexa and Google have deep integrations with Spotify, Apple Music, and their own streaming services. Home Assistant can control media players, but asking it to "play jazz in the kitchen" requires more setup than you'd expect. My earlier piece on robot vacuums and smart home security touched on how deeply these ecosystems embed themselves. Breaking free has real costs.

The intent system is literal. If you say "make it warmer in here," Alexa knows you mean the thermostat. Home Assistant's built-in intent system needs precision: "set the living room thermostat to 72." Adding a local LLM fixes this, but that bumps up your hardware requirements.
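To see why a template-driven matcher behaves this way, here is a deliberately tiny sketch. It is not how Home Assistant implements intent matching; the two regex templates and the `match_intent` helper are invented for illustration. The point is only that a sentence either fits a known pattern or it doesn't.

```python
import re
from typing import Dict, Optional

# Two made-up sentence templates. Real systems have many more, but the principle holds.
TEMPLATES = [
    (re.compile(r"^turn (?P<state>on|off) (?:the )?(?P<name>.+)$"), "switch"),
    (re.compile(r"^set (?:the )?(?P<name>.+) to (?P<value>\d+)$"), "set_temperature"),
]


def match_intent(text: str) -> Optional[Dict[str, str]]:
    """Return the first template match as a dict, or None if nothing fits."""
    cleaned = text.lower().strip().rstrip(".!?")
    for pattern, intent in TEMPLATES:
        match = pattern.match(cleaned)
        if match:
            return {"intent": intent, **match.groupdict()}
    return None


print(match_intent("Set the living room thermostat to 72"))
# {'intent': 'set_temperature', 'name': 'living room thermostat', 'value': '72'}
print(match_intent("make it warmer in here"))
# None
```

"Make it warmer in here" names no device and no target value, so there is nothing for a template to capture. That gap is what an LLM in the intent slot fills.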

Wake word detection isn't perfect. The openWakeWord engine is good and getting better, but it produces more false positives and false negatives than the heavily optimized, cloud-trained models from Amazon and Google. In my experience, it works well in quiet rooms and struggles with background noise. TV on in the other room? Expect some missed wake words.
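The false positive versus false negative tension comes down to a threshold on the detector's confidence score. Here is a small sketch of that trade-off. The scores in `SAMPLES` are invented for illustration and are not measurements of openWakeWord or any other engine; `error_rates` is a hypothetical helper.

```python
from typing import List, Tuple

# (detector score between 0 and 1, whether the wake word was actually spoken)
# These values are made up purely to show the shape of the trade-off.
SAMPLES: List[Tuple[float, bool]] = [
    (0.95, True), (0.80, True), (0.55, True), (0.40, True),
    (0.60, False), (0.30, False), (0.10, False), (0.05, False),
]


def error_rates(samples: List[Tuple[float, bool]], threshold: float) -> Tuple[float, float]:
    """Return (miss rate, false trigger rate) when firing on scores >= threshold."""
    spoken = [score for score, is_wake in samples if is_wake]
    not_spoken = [score for score, is_wake in samples if not is_wake]
    if not spoken or not not_spoken:
        raise ValueError("need at least one positive and one negative sample")
    missed = sum(1 for score in spoken if score < threshold)
    false_triggers = sum(1 for score in not_spoken if score >= threshold)
    return missed / len(spoken), false_triggers / len(not_spoken)


for threshold in (0.2, 0.5, 0.7):
    miss, false_trigger = error_rates(SAMPLES, threshold)
    print(f"threshold {threshold}: miss rate {miss:.2f}, false trigger rate {false_trigger:.2f}")
```

Raising the threshold trades false triggers for missed wake words, and lowering it does the reverse. Background noise squeezes the two groups of scores together, which is why no single threshold feels right with the TV on.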

Initial setup takes a Saturday. Not a weekend. Not a week. One focused Saturday afternoon to get the basic pipeline running with a phone as your input device. Add another hour or two per satellite. This is way better than it was even a year ago.

But here's the thing nobody's saying about these trade-offs: they're all improving on a monthly cadence, while the commercial alternatives are getting worse on the same cadence. That trend line matters more than any point-in-time comparison.

The Privacy Argument Is Stronger Than You Think

When I tell other engineers I run a self-hosted voice assistant, the first question is always about capability. "Can it do X? Can it do Y?" Wrong question. The right question is: "Why am I sending recordings of my voice from my bedroom to Amazon's servers?"

With a fully local setup, your voice data never leaves your network. No recording history on a corporate server. No employee listening to your commands for "quality improvement." No terms-of-service update that quietly expands what they can do with your data.

We wouldn't ship a production system that streamed all user data to a third party with no audit trail and a ToS that lets them use it for training ML models. So why do we accept exactly that in our living rooms?

What Comes Next

Local speech-to-text models are getting smaller and faster. Local LLMs are becoming practical on consumer hardware. Home Assistant's community is one of the most active open-source ecosystems I've seen, with new voice features landing in nearly every monthly release.

My prediction: within 12 months, the average Home Assistant voice setup will match the core smart home capabilities of Alexa and Google Assistant for lights, climate, locks, and routines. It won't match them for shopping or music discovery. But for the one thing a voice assistant in your home should actually do, which is control your home, it'll be there. Probably past it.

If you're a privacy-conscious engineer who's been waiting for self-hosted voice to be "ready enough," stop waiting. Start with a phone and the Whisper add-on this weekend. You'll be surprised how quickly you stop missing Alexa. And how good it feels to know that when you say "turn off the lights," the only thing listening is hardware you own, running software you control.

Photo by Mark Bishop on Unsplash.